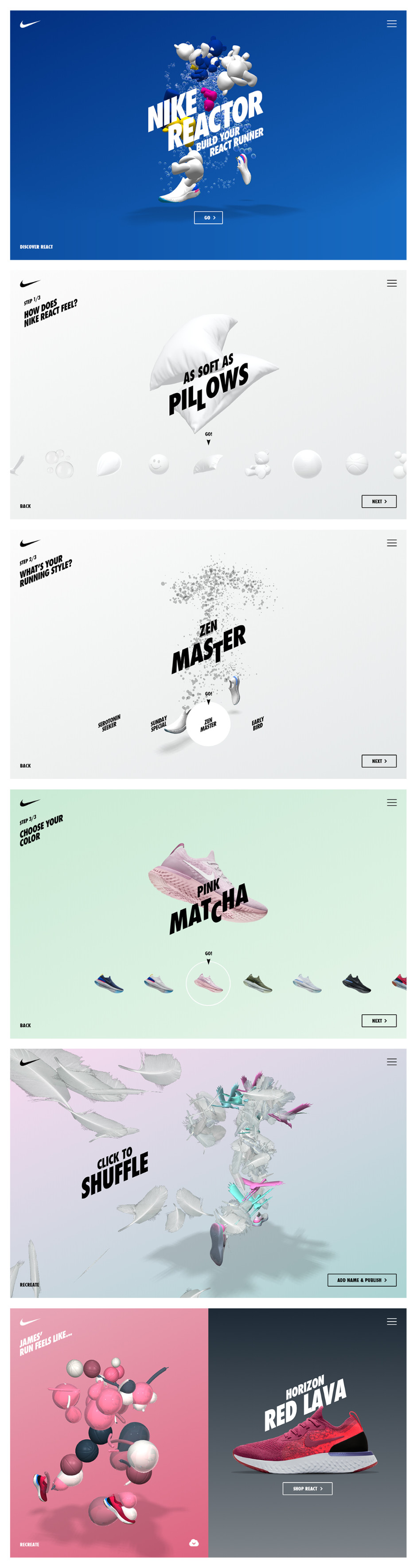

Site of the Month for May has been won by DPDK for their 3D WebGL experience that shows us what the new Nike React shoes feel like. Thanks for getting involved in the voting process and spreading the word; the winner of the year's Pro Plan in the Awwwards Directory is announced at the end of the article. Now DPDK tells us more about the making of their SOTM-winning project.

Nike React.

Nike has just brought a revolutionary running innovation to the market, and it's called React. Starting as an in-store activation and spreading as a mobile-first website, this experimental campaign enables some breathtaking creations, all done in WebGL. Inspired by how it feels to run on React, we invite you to visualise those feelings by creating a unique running figure. The site offers different categories of analogies (soft, light, responsive and durable items), which you combine into a customised running figure that suits your style.

Server architecture.

We used Google Compute Engine to set up a cluster of four web servers running Ubuntu 16.04. In front of these servers we run a Google HTTPS load balancer with health checks to make sure all servers are responding as they should. To make the site as fast as possible in every country, we also used Google Cloud CDN, which serves the static assets. Alongside the web servers we run a database server, which stores the runner data, and a video render server for the Nike React share videos. As for the hosting provider, we host all of our new projects in Google Cloud, so this project was no different.
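To illustrate what health-checked routing in front of a server pool means, here is a minimal sketch under simplifying assumptions: a plain round-robin balancer that skips any server whose last health check failed. The class, method and server names are hypothetical, and Google's HTTPS load balancer is far more sophisticated than this; the sketch only shows the idea.

```typescript
// Hypothetical, simplified model of health-checked routing: traffic only goes
// to web servers whose most recent health check passed. This is NOT how the
// Google HTTPS load balancer is implemented; it only illustrates the idea.

interface Backend {
  name: string;
  healthy: boolean; // outcome of the most recent health check
}

class RoundRobinBalancer {
  private readonly backends: Backend[];
  private next = 0; // index where the next search starts

  constructor(backends: Backend[]) {
    this.backends = backends;
  }

  // Record the result of a health check for one backend.
  markHealth(name: string, healthy: boolean): void {
    const backend = this.backends.find((b) => b.name === name);
    if (backend) backend.healthy = healthy;
  }

  // Return the next healthy backend in round-robin order,
  // or undefined when every backend is failing its health check.
  pick(): Backend | undefined {
    const n = this.backends.length;
    for (let i = 0; i < n; i++) {
      const index = (this.next + i) % n;
      if (this.backends[index].healthy) {
        this.next = (index + 1) % n;
        return this.backends[index];
      }
    }
    return undefined;
  }
}

// Four web servers, matching the cluster size above; the names are made up.
const balancer = new RoundRobinBalancer(
  ["web-1", "web-2", "web-3", "web-4"].map((name) => ({ name, healthy: true })),
);
balancer.markHealth("web-2", false); // web-2 fails its health check
const picks = [1, 2, 3, 4].map(() => balancer.pick()?.name);
// picks: ["web-1", "web-3", "web-4", "web-1"]
```

The unhealthy server is simply skipped until a later health check marks it healthy again, so visitors never get routed to a server that is not responding.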

Back-end and front-end technologies.

- WebGL animations

All WebGL animations are built with Three.js, the 3D library we use in most of our projects that contain WebGL animations.

- Video generation

To generate the videos for a React Runner, we use a socket server running in Node. On the front-end we capture around 120 frames (the number of frames depends on the chosen running style). These frames are sent to Node over WebSockets. Finally, the images are merged into a video using ffmpeg, and Node sends a ‘completed’ message back to the front-end over the same socket.
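The server side of this pipeline can be sketched roughly as follows, assuming each socket message carries a frame index plus its image data. The `FrameCollector` class, the `frame_%04d.png` naming and the 30 fps rate are all hypothetical choices for illustration; the socket layer and file writing are left out. The ffmpeg flags used (`-framerate`, `-i` with a `%04d` image-sequence pattern, `-c:v libx264`, `-pix_fmt yuv420p`) are standard ffmpeg options.

```typescript
// Hypothetical server-side helper: collects frames sent over a socket and,
// once every frame has arrived, provides the ffmpeg arguments that merge the
// numbered images into an MP4. Sockets and disk I/O are deliberately omitted.

class FrameCollector {
  private readonly frames = new Map<number, string>(); // index -> image data
  private readonly expected: number;

  constructor(expected: number) {
    this.expected = expected; // e.g. around 120, depending on running style
  }

  // Called for every incoming socket message; returns true once complete.
  add(index: number, data: string): boolean {
    if (!Number.isInteger(index) || index < 0 || index >= this.expected) {
      throw new RangeError(`frame index ${index} out of range`);
    }
    this.frames.set(index, data); // a resent frame simply overwrites
    return this.isComplete();
  }

  isComplete(): boolean {
    return this.frames.size === this.expected;
  }

  // File name for a frame, matching the %04d pattern passed to ffmpeg.
  static fileName(index: number): string {
    return `frame_${String(index).padStart(4, "0")}.png`;
  }
}

// Arguments for: ffmpeg -y -framerate <fps> -i <dir>/frame_%04d.png
//                -c:v libx264 -pix_fmt yuv420p <output>
function buildFfmpegArgs(dir: string, fps: number, output: string): string[] {
  return [
    "-y", // overwrite the output file if it already exists
    "-framerate", String(fps), // frame rate of the input image sequence
    "-i", `${dir}/frame_%04d.png`, // numbered images, starting at 0000
    "-c:v", "libx264", // encode as H.264
    "-pix_fmt", "yuv420p", // pixel format most players can decode
    output,
  ];
}
```

In Node, these arguments could then be handed to `spawn("ffmpeg", args)` from `node:child_process`, with the ‘completed’ message sent back over the socket once the process exits successfully.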

Web stack, frameworks and tools.

At DPDK we switched to a more reliable stack six months ago, focusing more than ever on reusing code to make sure we met our deadlines. We used this stack for the Nike project as well. The stack is driven by JavaScript on the front-end and, in this case, a PHP back-end. The front-end consists of React as our view layer and Redux as the data store throughout the application. For the WebGL animations we use Three.js, as in most of our WebGL applications. All page animations are done with GSAP.
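As an illustration of Redux acting as the data store, the runner builder's state could be modelled with a reducer along these lines. This is a minimal sketch, not DPDK's code: the action names, state shape and item names are hypothetical, and Redux itself is not imported, because a reducer is just a pure function `(state, action) => newState`.

```typescript
// Hypothetical Redux-style state for the runner builder: one chosen item per
// analogy category. Redux is not imported; this is only the reducer.

type Category = "soft" | "light" | "responsive" | "durable";

interface RunnerState {
  selections: Partial<Record<Category, string>>; // chosen item per category
}

type RunnerAction =
  | { type: "runner/selectItem"; category: Category; item: string }
  | { type: "runner/reset" };

const initialState: RunnerState = { selections: {} };

function runnerReducer(
  state: RunnerState = initialState,
  action: RunnerAction,
): RunnerState {
  switch (action.type) {
    case "runner/selectItem":
      // Return new objects instead of mutating the previous state.
      return {
        ...state,
        selections: { ...state.selections, [action.category]: action.item },
      };
    case "runner/reset":
      return initialState;
    default:
      return state;
  }
}
```

Because the reducer never mutates its input, each selection produces a fresh state object, which is what lets React components re-render predictably when the figure changes.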

The back-end is built on a custom setup using PhalconPHP, a PHP framework. This is where all the default routes, such as robots.txt and the sitemap, are configured and handled. All API calls from the front-end end up in our Phalcon controllers.

What we learnt.

- We learned that generating videos from a sequence of captured images is possible! In an earlier version of the project we had separate geometries and materials, so you could have things like basketball birds or bubble teddy bears. Some of this looked cool, but there were a lot of strange and ugly combinations, so in the end we opted for something more grounded.

- There are three separate animation systems built on top of the running cycle. They are interchangeable bone by bone and can appear within the same composition.

- One of the first motion prototypes came out of the renderer showing a mannequin running backwards at high speed with her arms waving in different directions, giving off a very freaky ‘exorcist’ vibe.
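The idea of several animation systems sharing one running cycle and being interchangeable bone by bone can be sketched like this. Everything here is hypothetical: the bone names, the three example systems (smooth, bouncy, stepped), the one-second cycle and the swing amplitude are illustrative assumptions, not the systems used in the project. Each system maps a bone and a time to a rotation, and a composition simply picks one system per bone.

```typescript
// Hypothetical sketch: three animation systems driven by the same running
// cycle. Each returns a rotation (radians) for a bone at time t, and a
// composition assigns one system per bone, so systems can be mixed freely.

type BoneName = "hips" | "leftLeg" | "rightLeg" | "leftArm" | "rightArm";
type SystemName = "smooth" | "bouncy" | "stepped";
type AnimationSystem = (bone: BoneName, t: number) => number;

const CYCLE_SECONDS = 1; // length of one running cycle (assumption)
const AMPLITUDE = 0.6; // maximum swing in radians (assumption)
const STEPS_PER_CYCLE = 8; // held poses per cycle for the stepped system

// Phase offsets (fraction of a cycle) so opposite limbs swing against each other.
const PHASE: Record<BoneName, number> = {
  hips: 0, leftLeg: 0, rightLeg: 0.5, leftArm: 0.5, rightArm: 0,
};

function smooth(bone: BoneName, t: number): number {
  return AMPLITUDE * Math.sin(2 * Math.PI * (t / CYCLE_SECONDS + PHASE[bone]));
}

const systems: Record<SystemName, AnimationSystem> = {
  // Plain sinusoidal swing.
  smooth,
  // Swing folded to always be positive, giving a bouncing look.
  bouncy: (bone, t) => Math.abs(smooth(bone, t)),
  // Holds each pose for 1/8 of the cycle, like stop-motion.
  stepped: (bone, t) =>
    smooth(bone, (Math.floor((t / CYCLE_SECONDS) * STEPS_PER_CYCLE) / STEPS_PER_CYCLE) * CYCLE_SECONDS),
};

// A composition picks one system per bone.
type Composition = Record<BoneName, SystemName>;

function poseAt(composition: Composition, t: number): Record<BoneName, number> {
  const pose = {} as Record<BoneName, number>;
  for (const bone of Object.keys(composition) as BoneName[]) {
    pose[bone] = systems[composition[bone]](bone, t);
  }
  return pose;
}
```

Since every system reads the same cycle time, swapping the system on a single bone changes only that bone's motion while the figure stays in step, which is also how an odd mix of settings could produce surprising results like the backwards-running prototype.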

To everyone who voted and tweeted, thanks for showing the love! The winner of the year's Pro Plan in our Directory is @dubois_norman; please DM us your username to activate your prize!