Apr 21, 2021

U.S. Air Force ‘Into The Storm’ by MediaMonks wins Site of the Month March 2021

Massive congratulations to the very talented team at MediaMonks, who have won Site of the Month for March 2021 with Into the Storm. Thanks to them for putting together this detailed case study, and thanks to all of you who voted. The winner of the Pro Plan is named at the end of the article.

On August 8, 2010, a civilian airplane crashed in the Chugach Mountains in Alaska. A special operations team in the U.S. Air Force was sent out to bring the survivors back to safety. In Into the Storm, we recreate the entire real-life event digitally, showing you first-hand exactly what it takes to be a pararescueman. Demonstrating a whole new form of interactive documentary, Into the Storm educates people and inspires them to apply to become pararescuemen themselves.

Trailer video.

Setting the scene

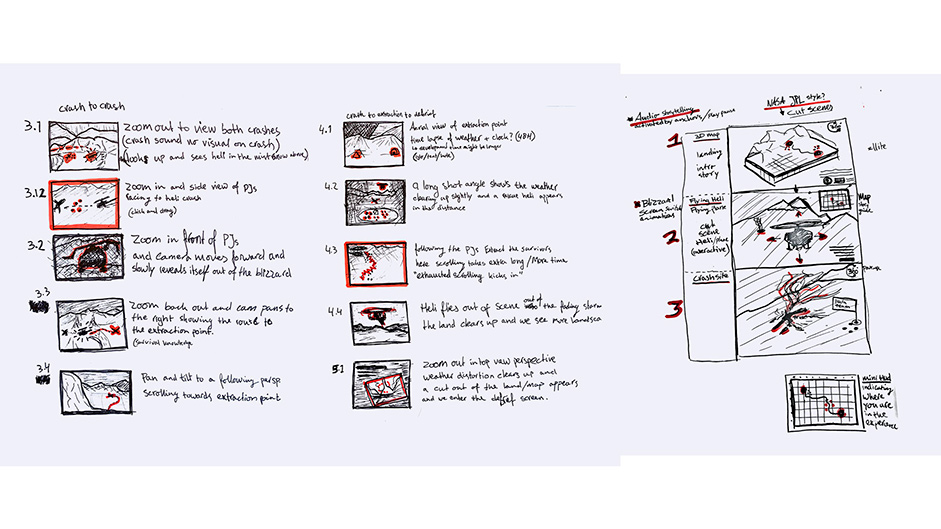

To make this interactive documentary feel as true-to-life as possible, we took a lot of time and care to make sure that the facts we were working from were detailed and that the glacier we were building accurately resembled the one where the rescue mission took place. To achieve a well-rounded and realistic experience, we needed to consider three elements: the story, the environment, and the interactions.

To begin, we gathered every available detail about the original crash and the rescue mission. We wanted to know the exact weather conditions, the timeline of events, the setbacks each pararescueman faced, and the tools they used to make the rescue a success. We also wanted to identify the key moments and decisions during the rescue that led to everyone’s survival.

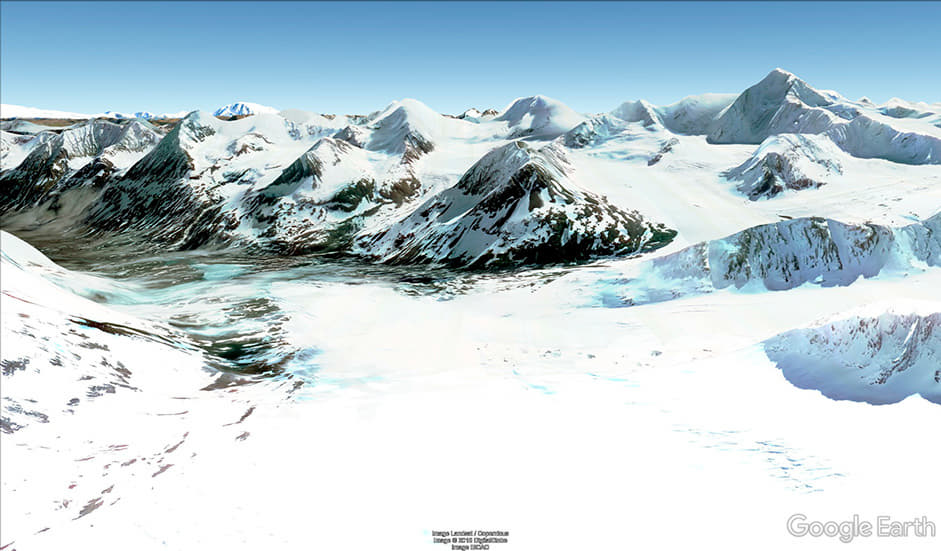

Due to the remote nature of the location, we weren’t able to travel to the Chugach Mountains and capture real data. We solved this with a slightly unconventional approach: taking screen recordings from Google Earth (see Image 2). The shots available from Google Earth already resembled the view from a helicopter hovering over the mountains. We used these images to capture parallax pixel information and create a 3D environment. We captured hundreds of images from Google Earth, from as many angles as we could. We were quite surprised at how accurately the shapes of the mountains developed when using a technique called photogrammetry (see Video 3).

Video 3 - The photogrammetry software. Every blue square is a captured position that is calculated and merged into one scene.

Video 4 - Results after calculations where we can see the shapes and textures of the landscape.

We then used specialized software (see Video 5) to transform the data into an image called a heightmap. By importing the heightmap into World Machine, we managed to increase the resolution to a much higher 16K output, where we could add more detail such as landscape erosion, layered textures and sharper mountain shapes. This way, we were able to bring our camera angles closer to ground level without losing quality during the experience.
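To make the term concrete, here is a minimal sketch of what a heightmap encodes: a grid of elevation samples normalized into 16-bit grayscale values. This is not the software used in the project, which the article does not name. The function name `elevationsToHeightmap` and the sample elevations are assumptions for illustration only.

```typescript
// Hypothetical helper (not from the project): converts a grid of elevation
// samples into 16-bit heightmap values, where 0 is the lowest point and
// 65535 is the highest.
interface Heightmap {
  width: number;
  height: number;
  data: Uint16Array; // row-major grayscale values
}

function elevationsToHeightmap(elevations: number[][]): Heightmap {
  const height = elevations.length;
  let width = 0;
  let min = Infinity;
  let max = -Infinity;

  // First pass: find the grid width and the elevation range.
  for (const row of elevations) {
    if (row.length > width) width = row.length;
    for (const e of row) {
      if (e < min) min = e;
      if (e > max) max = e;
    }
  }

  const range = max - min;
  const data = new Uint16Array(width * height);

  // Second pass: normalize each sample into the 0..65535 range.
  let y = 0;
  for (const row of elevations) {
    let x = 0;
    for (const e of row) {
      data[y * width + x] = range > 0 ? Math.round(((e - min) / range) * 65535) : 0;
      x++;
    }
    y++;
  }

  return { width, height, data };
}

// Usage with made-up elevations in metres.
const sampleHeightmap = elevationsToHeightmap([
  [0, 500],
  [1000, 250],
]);
```

Storing 16 bits per sample rather than 8 leaves far more vertical steps to work with, which helps avoid visible terracing when the camera gets close to the terrain.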

Video 5 - Adding details in World Machine.

After exporting the high-resolution images, we still needed Clarisse from Isotropix (see Video 6) to handle the heavy landscape, so we could play with the light and mood of the scene. Part of the lighting was manageable in WebGL, but we also created a base by adding accurate details and shadows that were baked and optimized into one large grid texture setup. Once the landscape of the rescue mission was complete, we could start plotting out our scenes and the route of the mission, based on logs from the original rescue team.

Video 6 - We could change the light of the scene combined with all the details.

Involving the audience in the mission

We always knew that this experience would benefit from interactive moments that allow people to participate in the story and feel directly involved. Since the event was so exciting and interesting, we designed these moments to let users discover more details and first-hand accounts of the mission, and to trigger voice recordings from the actual pararescuemen who were there, taking the immersion one step further.

To signal those key moments where important decisions or events took place on the mission, we turned them into interactive hotspots where the user is required to work together with the Air Force operations team.

To keep the interactions user-friendly and avoid distracting from the story, we plotted interactions that would require only a simple gesture, whether the user was on mobile or desktop. They included scroll, drag, and explore actions:

Scroll: The user follows in the footsteps of the pararescuemen by scrolling their way through the long trek, all while facing hazardous weather like headwinds and snowstorms. Scrolling faster decreases the user's visibility in the windy blizzard, making the conditions feel more lifelike and perilous.

Drag: The user has to drag down to make the helicopter drop, or drag up to airlift people or supplies. Letting the user initiate this step in the mission makes them feel more involved in the rescue.

Explore: The user is encouraged to look around the plane wreckage and find the information hotspots along the route that trigger audio clips from the pararescuemen on the real trip, explaining what they used and did.
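The link between scroll speed and visibility in the scroll interaction could be sketched roughly as follows. The article does not describe the actual mapping, so the linear falloff, the function name `visibilityFromScrollSpeed`, and the default values for `maxSpeed` and `minVisibility` are all assumptions.

```typescript
// Hypothetical mapping (not from the project): the faster the user scrolls,
// the lower the visibility, down to a floor so the scene never goes blank.
function visibilityFromScrollSpeed(
  speed: number,        // scroll speed in pixels per second (sign is ignored)
  maxSpeed = 3000,      // assumed speed at which visibility bottoms out
  minVisibility = 0.2   // assumed lowest visibility, where 1 means fully clear
): number {
  // Normalize the speed into 0..1, clamping anything above maxSpeed.
  const t = Math.min(Math.abs(speed) / maxSpeed, 1);
  // Linearly fade from full visibility (1) down to minVisibility.
  return 1 - t * (1 - minVisibility);
}

// Usage: a value like this could drive fog density or snow intensity.
const calmVisibility = visibilityFromScrollSpeed(0);
const rushedVisibility = visibilityFromScrollSpeed(2500);
```

In practice the result would probably be smoothed over a few frames so that visibility does not flicker when the scroll speed changes abruptly.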

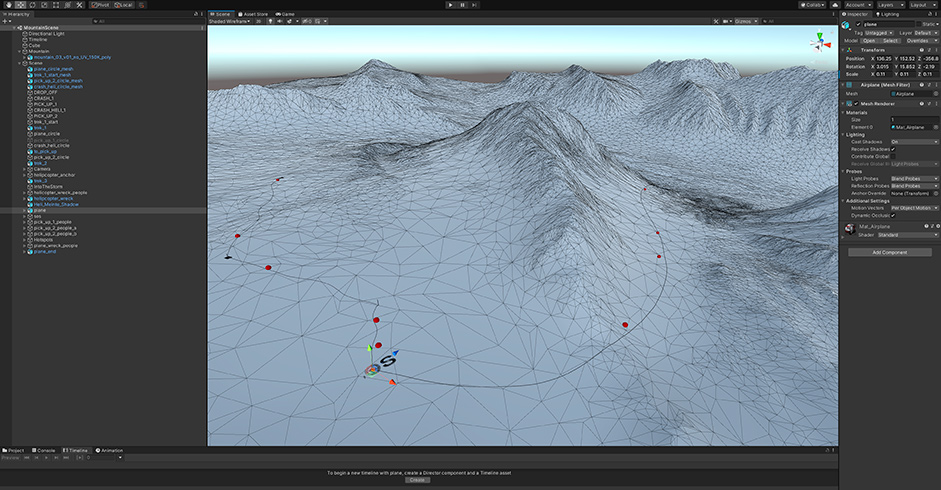

Building the environment

We used Unity to position the various elements of the scene, which meant we had to import the 3D models of the landscape, helicopter, plane, and people into Unity. Using Unity's animation timeline feature, we were able to animate the camera and helicopter. Once complete, both the 3D data and the animations were exported with a custom exporter to a data format that could be easily used in WebGL.

Using WebGL for a dynamic, immersive experience

To create a dynamic, immersive environment, several effects were developed in our custom WebGL framework:

- Dynamic lighting

- Height-based fog

- Dynamic clouds

- A dynamic particle system to create the snow

- Blowing snow near the surface

- Post-production effects, like vignette, contrast, exposure, black and white point, noise and saturation

- Snow-on-lens effect

- Lens flare

- Color grading

Each of these effects was added on a real-world scale to make every scene look as lifelike and physically accurate as possible, helping to create optimum immersion.
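As an illustration of one effect from the list, height-based fog is commonly approximated by letting the fog density fall off exponentially with altitude. The sketch below shows that general technique, not the formula used in the MediaMonks framework, which the article does not describe. The function name and the default `density` and `falloff` values are assumptions, and in a real WebGL project this math would normally live in a fragment shader rather than in TypeScript.

```typescript
// Hypothetical sketch of exponential height fog (not the project's shader).
// Returns a fog factor from 0 (no fog) towards 1 (fully fogged).
// Simplification: the density is evaluated at the surface point's height
// only, instead of being integrated along the whole view ray.
function heightFogFactor(
  distance: number,  // distance from the camera to the surface point, in metres
  height: number,    // altitude of the surface point above the fog base, in metres
  density = 0.02,    // assumed fog density at the fog base
  falloff = 0.1      // assumed rate at which the fog thins out with altitude
): number {
  // Fog gets thinner the higher the point sits above the fog base.
  const localDensity = density * Math.exp(-falloff * Math.max(height, 0));
  // Standard exponential fog: more distance means more fog.
  return 1 - Math.exp(-localDensity * distance);
}

// Usage: a valley floor ends up foggier than a ridge at the same distance.
const valleyFog = heightFogFactor(200, 0);
const ridgeFog = heightFogFactor(200, 50);
```

The resulting factor would typically be used to mix the surface color with a fog color, so low-lying areas fade out while peaks stay crisp.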

In addition, for almost every effect, multiple properties were made adjustable in real-time in the development environment. This made it very easy to create a unique set of presets for each key moment in the experience.

As the user progresses through the story, the presets of the various key moments are seamlessly blended to provide a continuous experience.
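One simple way to blend presets like this is to interpolate linearly between the numeric properties of two neighbouring key moments. The sketch below assumes each preset is a flat set of numbers. The `Preset` type, the `blendPresets` function and the property names are hypothetical, and the project's actual blending may well differ.

```typescript
// Hypothetical preset shape: a flat map of numeric effect properties.
type Preset = Record<string, number>;

// Blends two presets: t = 0 returns `from`, t = 1 returns `to`.
// Properties that are missing from `to` keep their `from` value.
function blendPresets(from: Preset, to: Preset, t: number): Preset {
  const k = Math.min(Math.max(t, 0), 1); // clamp progress into 0..1
  const result: Preset = {};
  for (const key in from) {
    const a = from[key] ?? 0;
    const b = to[key] ?? a;
    result[key] = a + (b - a) * k;
  }
  return result;
}

// Usage with made-up values for two key moments.
const calm: Preset = { fogDensity: 0.0, exposure: 1.0, snowAmount: 0.25 };
const storm: Preset = { fogDensity: 1.0, exposure: 2.0, snowAmount: 1.0 };
const quarterWay = blendPresets(calm, storm, 0.25);
```

Driving `t` from the user's progress between two key moments gives a continuous transition. An easing curve could be applied to `t` first if a linear blend feels too mechanical.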

Final thoughts

By bringing in-depth research, data and technology together in one project, we were able to build a highly accurate retelling of a real event. The visual complexity mixed with the simple scrolling story mechanic meant it became an experience that was as spectacular as it was emotionally engaging.

About MediaMonks

MediaMonks is a global creative production company that partners with clients across industries and markets to craft amazing work for leading businesses and brands. Its integrated production capabilities span the entire creative spectrum, covering anything you could possibly want from a production partner, and probably more.

Thanks for tweeting and voting! @mohnish_landge, you have won the Pro Plan. DM us on Twitter to get your prize! :)