“Russian Pantheon” was designed for the 30th anniversary of the Declaration of State Sovereignty of the Russian Federation. The Millennium of Russia monument, located in Novgorod the Great, was chosen as the main object of our web application.

The monument, erected in 1862 under the Russian czar Alexander II, was itself an interactive project, at least for its time. Centuries of history fit compactly into one monument that you can look at endlessly, finding new details each time. Now any internet user can explore the numerous details of the “Millennium of Russia” monument and get complete information about each part and the reason for its inclusion in the composition.

Shooting & Photogrammetry

The Millennium of Russia monument includes 128 characters from Russian history; it is 15.7 meters high and 9 meters in diameter. We shot it both with a quadcopter and by hand. We waited for a cloudy day so that there wouldn't be too many harsh shadows, which could get in the way of a high-quality photogrammetry model. To make each of the 128 figures 100% recognizable and to avoid distorting the sculptures, we shot them from 20 angles. The large figures of the upper tier, an angel with a cross and the figure personifying Russia, were shot from a drone.

The six sculpture groups of the middle tier were shot with a remote-controlled camera fixed on a tripod. We shot the lower tier from head height without any supports such as a stand or tripod. In total, we took about 3000 shots, from which we built a photogrammetry model of 5 million polygons with 76 4K textures.

While building the photogrammetry model, we were also considering how to make such a massive 3D model viewable in a browser, equally fast and with no lag at any internet speed. The final concept of the user interface (a 3D model moving gradually along with the text, on any device) was a real challenge for us. We had never taken on such a difficult task before, let alone in such a short time (3 weeks).


The first and main problem was size. This is not just about the weight of the downloaded resources (more than 700 MB) but also about the video memory needed for that many textures, which would be 6.3 GB. We therefore needed an algorithm, built on Three.js, that loads textures into video memory and unloads them again. Of course, we could have limited the zoom, showing the viewer a low-poly model from a distance and not worrying about detail, but then the 109 of the 128 characters on the lower tier of the monument would have become unrecognizable. That’s why we needed dynamic loading.
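The video memory figure above can be reproduced with a back-of-the-envelope estimate. The sketch below is only an illustration under assumptions the text does not state: square 4096×4096 textures, 4 bytes per pixel (uncompressed RGBA), and a full mipmap chain that adds roughly one third. The function name and parameters are hypothetical.

```javascript
// Rough estimate of GPU memory used by uncompressed textures.
// Assumptions (not from the article): 4096x4096 pixels, 4 bytes per pixel,
// and a full mipmap chain, which adds about 1/3 on top of the base level.
function estimateTextureMemoryGiB(count, size = 4096, bytesPerPixel = 4, mipmaps = true) {
  const base = size * size * bytesPerPixel;             // bytes for the top mip level
  const perTexture = mipmaps ? (base * 4) / 3 : base;   // 1 + 1/4 + 1/16 + ... is about 4/3
  return (count * perTexture) / 1024 ** 3;
}

console.log(estimateTextureMemoryGiB(76).toFixed(2));                 // 6.33
console.log(estimateTextureMemoryGiB(76, 4096, 4, false).toFixed(2)); // 4.75
```

Under these assumptions 76 textures come to about 6.3 GiB, which is far more than most consumer GPUs and mobile devices can spare for one web page.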

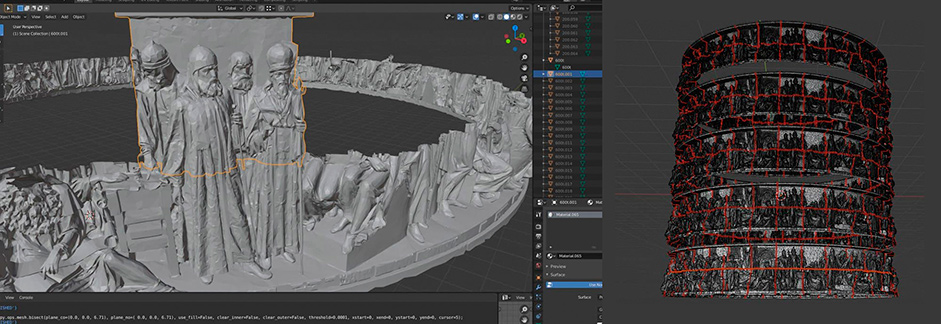

With the help of the Blender Python API, the model was cut into tiers and each tier was cut into several segments. Each segment was baked with different levels of detail into a separate GLB file.

Loading the 3D geometry and textures

The JavaScript algorithm looks like this: on initial page load, all segments of all tiers are loaded with simplified geometry and a matching simplified texture. Then, if a segment comes into the field of view while you are scrolling and is close enough, a bigger texture is loaded for it, and after that the same segment is loaded with full geometry and the maximum texture. Conversely, if a segment goes out of view, it is cached and its texture is unloaded from video memory, freeing it up. This continues for as long as the viewer scrolls and swipes.
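The loading logic described above can be sketched as a small state machine per segment. This is a minimal illustration, not the project's actual code: the level names, the `closeDistance` threshold, and the `stepSegment` function are all hypothetical, and the actual loading and unloading calls are only marked with comments.

```javascript
// Levels of detail for one segment, from cheapest to most expensive.
const LOD = { LOW: 0, BIG_TEXTURE: 1, FULL: 2 };

// Advance one segment by one step. Called repeatedly while the user scrolls.
// closeDistance is a hypothetical threshold in scene units.
function stepSegment(segment, inView, distance, closeDistance = 10) {
  if (!inView) {
    // Out of view: fall back to the simplified version and release the
    // heavy texture (in Three.js this could be texture.dispose()).
    segment.level = LOD.LOW;
  } else if (distance <= closeDistance && segment.level < LOD.FULL) {
    // In view and close: upgrade one level at a time, so the bigger
    // texture arrives before the full geometry with the maximum texture.
    segment.level += 1;
  }
  return segment.level;
}

const segment = { level: LOD.LOW };
console.log(stepSegment(segment, true, 5));  // 1
console.log(stepSegment(segment, true, 5));  // 2
console.log(stepSegment(segment, false, 5)); // 0
```

Upgrading one level per step keeps each individual load small, which is one way to avoid a visible stall when a segment first comes close to the camera.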

As for the flight around the model, it is nothing more than a camera animation made in Blender and baked into a glTF file. The scroll event triggers a GSAP animation that smoothly changes the camera position according to the data from that file.
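To illustrate how a scroll position can be mapped onto a baked camera track, here is a minimal sketch. It assumes the keyframes have already been read from the file into two plain arrays (ascending times and a flat list of x, y, z positions, the layout glTF uses for translation data) and that there are at least two keyframes. The function name is hypothetical, and the GSAP smoothing step is left out: in a real page the `progress` value could be tweened with `gsap.to` before sampling.

```javascript
// Linearly interpolate a baked camera track at a scroll progress in [0, 1].
// times: ascending keyframe times; positions: flat [x, y, z, x, y, z, ...].
function sampleCameraPosition(times, positions, progress) {
  const first = times[0];
  const last = times[times.length - 1];
  const clamped = Math.min(Math.max(progress, 0), 1);
  const t = first + clamped * (last - first);

  // Find the keyframe interval [i, i + 1] that contains t.
  let i = 0;
  while (i < times.length - 2 && times[i + 1] < t) i++;

  const span = times[i + 1] - times[i];
  const k = span > 0 ? (t - times[i]) / span : 0;

  const out = [];
  for (let c = 0; c < 3; c++) {
    const a = positions[i * 3 + c];
    const b = positions[(i + 1) * 3 + c];
    out.push(a + (b - a) * k); // blend each coordinate between the two keyframes
  }
  return out;
}

const times = [0, 1, 2];
const positions = [0, 0, 0, 10, 0, 0, 10, 0, 20];
console.log(sampleCameraPosition(times, positions, 0.25)); // [ 5, 0, 0 ]
console.log(sampleCameraPosition(times, positions, 0.75)); // [ 10, 0, 10 ]
```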

Very often while working on this project we had to feel our way through the process because of the lack of time, studying the necessary materials as we went along and deciding right away how to use them. In most cases, it was an entirely new experience for us. The final result was exactly what we had expected: “Russian Pantheon” looks as if a virtual camera controlled by the user travels around the monument, focusing on each of its 128 characters one by one. The visual and text parts of the project form a single entity: the picture and the description are two equal components.


The user interacts with the project through the simplest gesture, the swipe, moving from one fragment of the monument and its text to the next. The user experience is based on the most comprehensible logic: “I can see it - I can read about it”. We made sure that the visual and text information is perceived in a structured and comfortable way.

It was crucial that our project could be viewed on any device at any internet speed, equally fast and without lag.

It took only 28 days from the idea of creating such an unusual interactive history textbook to its publication. Even so, this project remains the most challenging in the history of Gigarama in terms of programming and 3D modeling.

Technologies

Server-side

Front-end

HTML5, CSS3, JS, Webflow, Three.js, jQuery, luxy.js, GSAP, webpack.

Company Info

Gigarama is a small team of like-minded people (there are only 6 of us) who were once carried away by the idea of telling stories with all sorts of web and multimedia technologies. It all started with shooting high-resolution panoramic images that let you view a large object or a mass event in full detail. This is how the projects “The Broken Heart of Paris” and “Army of Memory” appeared. Very soon, though, we got bored of using only this format. That is why today we work with many interactive techniques, including photogrammetry, 360 video, FPV drone shooting, and many others. The verbal components of our projects include texts, infographics, documentary footage, and short video interviews.